Glacier Expedited Retrievals Are Currently Not Available Please Try Again Later

These days, managing artefact lifecycles in the AWS cloud mainly consists of applying the correct policy to the objects in question. This results in server instances (using Amazon Data Lifecycle Manager) and S3 objects (using S3 lifecycle rules) being autonomously backed up and retained for a configurable period of time.

This is great for:

- DR (disaster recovery) strategies such as backup & restore

- Retention (VPC flow logs to S3)

- Security audit compliance (AWS CloudTrail logs to S3).

However, most of these strategies result in huge volumes of S3 storage being consumed, which leads most organisations to push objects into cheaper storage such as AWS S3 Glacier.

Sounds great, and it is. The cost of storage in AWS Glacier is significantly lower than in standard AWS S3. The lifecycle rules are simple to set up and result in a self-maintaining solution; however, have you ever tried to get access to an object in AWS Glacier? It's not quite as straightforward as putting them in.

What are we restoring?

It sounds obvious, but knowing which objects you need to restore is the first step. With a small list this isn't a problem: you can browse the S3 buckets to find the objects in question (via the AWS Web Console) and restore them with a few clicks in the GUI (well, you can make a restore request, but more about that later).

But what if you need to restore hundreds of objects (or in my case, millions of objects)? This manual approach isn't going to be feasible; we need a programmatic approach.

In this case the simplest approach was to use the AWS CLI (command-line interface) in a bash shell.

    # List every object in the bucket whose storage class is GLACIER
    # and keep the second text column (the object key)
    aws s3api list-objects-v2 \
      --bucket bucketname \
      --query "Contents[?StorageClass=='GLACIER']" \
      --output text \
      | awk '{print $2}' > glacier-restore-object-list.txt

The above AWS CLI command lists every object in the S3 bucket that has transitioned into Glacier and writes each object's full key (its path within the bucket) to a text file (glacier-restore-object-list.txt).

At this point you could modify the above command and add further filtering to reduce the object list to match your requirements. However, my requirement was to retrieve everything in Glacier from that bucket.
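
If you do need a narrower list, one option is to filter server-side with `--prefix` and have the JMESPath query return only the `Key` field. This also avoids relying on awk's column positions: awk splits on spaces, so a key containing a space would be cut short. The sketch below is a variation, not the command I ran. `list_glacier_keys` and the `logs/2019/` prefix are hypothetical, and it assumes AWS CLI credentials that allow `s3:ListBucket`.

```shell
# bash sketch: list GLACIER-class keys, optionally under one prefix, one key per line.
# list_glacier_keys is a hypothetical helper name.
list_glacier_keys() {
  local bucket=$1 prefix=${2:-}
  local args=(--bucket "$bucket")
  # --prefix narrows the listing server-side; only add it when one was given
  if [ -n "$prefix" ]; then
    args+=(--prefix "$prefix")
  fi
  # The query keeps GLACIER objects and projects just their Key.
  # Text output can place several keys on one tab-separated line, so tr splits
  # them onto separate lines; grep drops blank lines and the literal "None"
  # the CLI prints when there is nothing to list.
  aws s3api list-objects-v2 "${args[@]}" \
    --query "Contents[?StorageClass=='GLACIER'].Key" \
    --output text \
    | tr '\t' '\n' \
    | grep -v -e '^None$' -e '^$'
}

# Example (hypothetical prefix):
# list_glacier_keys bucketname "logs/2019/" > glacier-restore-object-list.txt
```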

Restoring the objects

When AWS transitions objects from S3 to Glacier, they switch into a state where you can only see the object's details and not its content. To get them back into a state where you can access the object again, you have to submit a restore request for each object.
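
A restore request is asynchronous, so it helps to be able to check on a single object afterwards. `head-object` returns a `Restore` field. It reads `ongoing-request="true"` while the job runs and `ongoing-request="false"` (plus an expiry date) once the temporary copy is readable. If no restore has been requested, the field is absent. The helper name `restore_status` and its three output labels are hypothetical. This is a minimal sketch and was not part of the original process.

```shell
# bash sketch: report the restore state of one archived object.
# restore_status and its labels (in-progress / restored / not-requested) are hypothetical.
restore_status() {
  local bucket=$1 key=$2 restore
  # --query Restore extracts just the Restore field; text output prints it raw,
  # or "None" when the object has no restore in progress or completed
  restore=$(aws s3api head-object \
    --bucket "$bucket" \
    --key "$key" \
    --query Restore \
    --output text)
  case $restore in
    *'ongoing-request="true"'*)  echo "in-progress" ;;
    *'ongoing-request="false"'*) echo "restored" ;;
    # Anything else, including "None" or an empty result after an error
    *)                           echo "not-requested" ;;
  esac
}

# restore_status bucketname "objectname"
```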

    # Request a 7-day restore of one object using the Bulk tier
    aws s3api restore-object \
      --restore-request '{
        "Days" : 7,
        "GlacierJobParameters" : {"Tier":"Bulk"}
      }' \
      --bucket bucketname \
      --key "objectname"

The above AWS CLI command is an example restore request for a single object stored in AWS Glacier. There are two additional parameters besides the location (S3 bucket & object key):

- The number of days the restored copy will be made available for

- The tier, which sets the retrieval type.

AWS has three different tiers when making restore requests:

- Expedited – expedited retrievals are typically made available within 1–5 minutes (availability depends on capacity; provisioned capacity guarantees it).

- Standard – standard retrievals typically complete within 3–5 hours [DEFAULT].

- Bulk – bulk retrievals typically complete within 5–12 hours.
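
The request body can be generated rather than typed by hand, which guards against a mistyped tier name (only `Expedited`, `Standard` and `Bulk` are accepted). A minimal sketch follows. `restore_request_json` is a hypothetical helper and is not part of the AWS CLI.

```shell
# bash sketch: build the --restore-request JSON for a given number of days and tier.
# restore_request_json is a hypothetical helper name.
restore_request_json() {
  local days=$1 tier=$2
  # Only the three retrieval tiers listed above are valid
  case $tier in
    Expedited|Standard|Bulk) ;;
    *) echo "unknown tier: $tier" >&2; return 1 ;;
  esac
  # Days must be a whole number of at least 1
  case $days in
    ''|*[!0-9]*) echo "days must be a whole number: $days" >&2; return 1 ;;
  esac
  # 10# forces base 10 so a value such as "08" is not read as octal
  if (( 10#$days < 1 )); then
    echo "days must be at least 1" >&2
    return 1
  fi
  printf '{"Days":%d,"GlacierJobParameters":{"Tier":"%s"}}\n' "$((10#$days))" "$tier"
}
```

For example, `aws s3api restore-object --restore-request "$(restore_request_json 7 Bulk)" --bucket bucketname --key "objectname"` sends the same request as the example above.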

Up-to-date Glacier retrieval request and retrieval pricing can be found on the Amazon S3 pricing page.

Requesting restoration (2 million times!)

Great, a simple bash script should get these restored:

    # Restore each key in the list, one request at a time
    while IFS= read -r x
    do
      echo "Begin restoring $x"
      aws s3api restore-object \
        --restore-request Days=7 \
        --bucket bucketname \
        --key "$x"
      echo "Done restoring $x"
    done < glacier-restore-object-list.txt

But only if I wanted to wait weeks for it to run…
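
To put "weeks" into numbers: a strictly sequential loop pays for the CLI start-up and an API round trip on every call. The sketch below assumes roughly one second per call, which is an assumption and was not measured during this restore. `estimate_days` is a hypothetical helper.

```shell
# bash back-of-envelope sketch: whole days a sequential loop would need.
# ms_per_request is an ASSUMED cost per CLI call, not a measured figure.
estimate_days() {
  local objects=$1 ms_per_request=$2
  # Integer arithmetic: total milliseconds -> seconds -> whole days (rounded down)
  echo $(( objects * ms_per_request / 1000 / 86400 ))
}
```

Under that assumption, `estimate_days 2000000 1000` prints 23, a little over three weeks.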

After a brief trip around the internet, I found I wasn't the only person ever to have attempted to retrieve many files from AWS Glacier. Most people recommended using the open-source Python tool s3cmd.

Simplicity itself; the command just requires the root S3 bucket/path, and all child objects should be restored.

    # Recursively request a 7-day Bulk restore of everything in the bucket
    s3cmd restore \
      --recursive s3://bucketname \
      --restore-priority=bulk \
      --restore-days=7

If I had infinite RAM this would have been great, but apparently 32 GB wasn't enough.

Okay, back to the AWS CLI, but it looks like a parallel approach is required if we want to get this done within a reasonable timescale.

I created a glacier directory locally with the following structure:

    glacier/
    glacier/bucketname/
    glacier/glacier-restore-object-list.txt
    glacier/main.sh
    glacier/sub.sh

First we need to split the list of objects to restore into separate files (with 2 million objects, I opted for 2,000 files with 1,000 objects in each).

    # Write 1,000 keys per chunk file (xaa, xab, ...) into glacier/bucketname
    cd glacier/bucketname
    split -l 1000 ../glacier-restore-object-list.txt
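
Before launching anything, it is worth checking that the split produced the expected number of chunks. The sketch below varies the command above and makes two assumptions. First, `split_list` is a hypothetical helper. Second, `-a 4` requests four-letter suffixes so that 2,000 chunks fit even where `split` does not widen its default two-letter suffix on its own (26 × 26 = 676 names). The chunk names still match `x*`.

```shell
# bash sketch: split a key list into fixed-size chunks and print how many were made.
# split_list is a hypothetical helper; outdir should not already contain x* files.
split_list() {
  local list=$1 lines=$2 outdir=$3
  mkdir -p "$outdir"
  # -a 4: four-letter suffixes (xaaaa, xaaab, ...); -l: lines per chunk
  split -a 4 -l "$lines" "$list" "$outdir/x"
  # Count the chunk files; tr strips the padding some wc implementations add
  find "$outdir" -maxdepth 1 -type f -name 'x*' | wc -l | tr -d ' '
}

# From glacier/bucketname (expect 2000 for 2 million keys):
# split_list ../glacier-restore-object-list.txt 1000 .
```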

I'll need a command that calls a sub-command for each of the 2,000 files, running each file of 1,000 objects in its own background process: glacier/main.sh (made executable with chmod +x *.sh)

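    #!/bin/bash
    # Start one background sub.sh per chunk file (xaa, xab, ...)
    for f in x*
    do
      ~/glacier/sub.sh "$f" &
    done
    # Wait for every background job to finish
    wait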

We will also need a sub-command, invoked once per background process, to deal with the 1,000 objects listed in its chunk file: glacier/sub.sh (made executable with chmod +x *.sh)

    #!/bin/bash
    # Submit a restore request for every key in one chunk file ($1)
    while IFS= read -r p
    do
      # Print the request first; used on its own for the dry run
      echo "aws s3api restore-object --restore-request '{\"Days\":7,\"GlacierJobParameters\":{\"Tier\":\"Bulk\"}}' --bucket bucketname --key $p"
      aws s3api restore-object \
        --restore-request '{"Days":7,"GlacierJobParameters":{"Tier":"Bulk"}}' \
        --bucket bucketname \
        --key "$p"
    done < ~/glacier/bucketname/"$1"

We now start the process from glacier/bucketname by executing main.sh:

    cd ~/glacier/bucketname
    ../main.sh

I first tried the above script with the restore command commented out and only the echo in place, to make sure it was going to make the retrieval requests as planned.
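
That echo-first dry run can also be driven by an environment variable instead of commenting lines in and out. The sketch below rewrites sub.sh as a function. `restore_chunk` and `DRY_RUN` are hypothetical names, and the 7-day Bulk request matches the one used above.

```shell
# bash sketch of sub.sh as a function with a dry-run switch.
# restore_chunk and DRY_RUN are hypothetical names.
restore_chunk() {
  local chunk=$1 bucket=$2 key
  local request='{"Days":7,"GlacierJobParameters":{"Tier":"Bulk"}}'
  # IFS= and -r keep leading spaces and backslashes in keys intact;
  # the || test also handles a final line with no trailing newline
  while IFS= read -r key || [ -n "$key" ]; do
    [ -z "$key" ] && continue
    if [ "${DRY_RUN:-0}" = 1 ]; then
      # Dry run: print a shell-quoted version of the call instead of sending it
      printf 'aws s3api restore-object --bucket %s --key %q\n' "$bucket" "$key"
    else
      # Defensive: </dev/null keeps the child from reading the chunk via stdin
      aws s3api restore-object \
        --restore-request "$request" \
        --bucket "$bucket" \
        --key "$key" < /dev/null
    fi
  done < "$chunk"
}
```

`DRY_RUN=1 restore_chunk xaa bucketname` previews the first chunk. Dropping `DRY_RUN=1` sends the requests.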

Speeding up the process

I was using a decent laptop with a native shell and a copious amount of RAM; however, I didn't want to tie it up for a lengthy period of time, or trust my ISP to maintain a decent connection.

So I opted to move the workload out to resources local to the data (AWS). This would also speed up the request process significantly. By this, I mean that I stood up an AWS EC2 instance to run the bash script on.

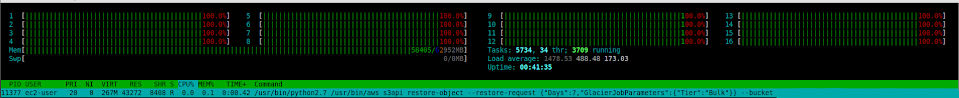

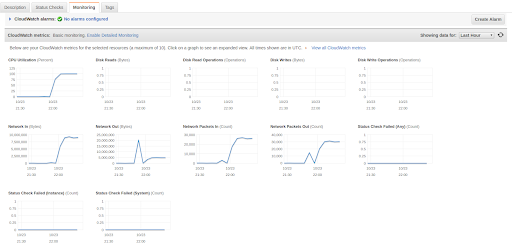

I chose to spin up an m5.4xlarge instance (16 vCPU, 64 GB RAM) running Amazon Linux (with the AWS CLI pre-installed). As a result, the restore requests for 2 million objects took less than 24 hours. I did, however, run the EC2 instance at 100% for the entire duration (efficient, with a load average over 1,400!).
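
A load average that high is consistent with main.sh starting all 2,000 workers at once. An alternative, which I did not use here, is to cap the number of concurrent workers with `xargs -P`. The instance then runs a fixed number of workers and starts the next chunk as each one finishes. A minimal sketch follows. `run_chunks_bounded` is a hypothetical helper, and the worker count is a tuning knob to find by experiment, not a known best value.

```shell
# bash sketch: run one worker per chunk file, at most $jobs at a time.
# run_chunks_bounded is a hypothetical helper; the worker is any executable
# that takes a single chunk file name, such as sub.sh above.
run_chunks_bounded() {
  local jobs=$1 worker=$2
  shift 2
  # NUL-separated names are safe for any file name;
  # -n 1 passes one chunk per worker and -P caps the parallel workers
  printf '%s\0' "$@" | xargs -0 -n 1 -P "$jobs" "$worker"
}
```

For example, from ~/glacier/bucketname, `run_chunks_bounded 16 ../sub.sh x*` would keep 16 workers busy. That figure is simply the m5.4xlarge vCPU count, used as a starting point.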

On reflection, I could've chosen an instance from the compute-optimised family (c5.4xlarge). This would have upgraded me from a 2.5 GHz Intel Xeon® Platinum 8175 processor to a 3.0 GHz Intel Xeon Platinum processor, which would likely have completed the job faster.

Conclusion

The above process spanned a couple of days and came about through research and trial and error. If I had to do it all again, it would be pretty simple, armed with the knowledge I have now.

The golden rule is to have a pre-tested strategy for retrieving your archived data, and to understand the complexity and lead time required to recover your backup data. This will enable you to estimate an accurate RTO (recovery time objective) for DR scenarios.

____

Richard Harper, Principal Consultant, Infinity Works Manchester

Source: https://www.infinityworks.com/insights/restoring-2-million-objects-from-glacier/
